Abstract

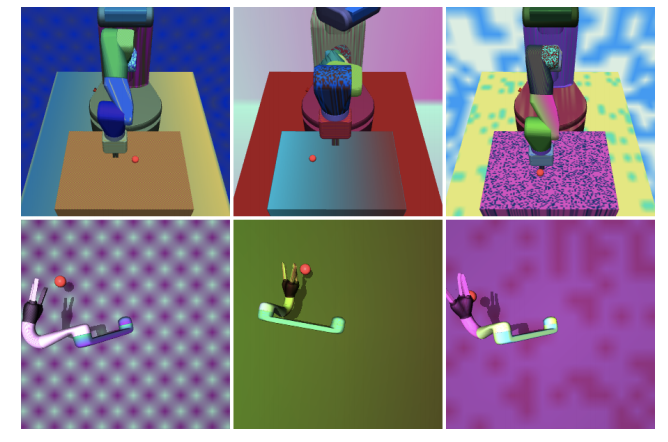

Deep reinforcement learning has the potential to train robots to perform complex tasks in the real world without requiring accurate models of the robot or its environment. A practical approach is to train agents in simulation, and then transfer them to the real world. One popular method for achieving transferability is to use domain randomisation, which involves randomly perturbing various aspects of a simulated environment in order to make trained agents robust to the reality gap. However, less work has gone into understanding such agents - which are deployed in the real world - beyond task performance. In this work we examine such agents, through qualitative and quantitative comparisons between agents trained with and without visual domain randomisation. We train agents for Fetch and Jaco robots on a visuomotor control task and evaluate how well they generalise using different testing conditions. Finally, we investigate the internals of the trained agents by using a suite of interpretability techniques. Our results show that the primary outcome of domain randomisation is more robust, entangled representations, accompanied with larger weights with greater spatial structure; moreover, the types of changes are heavily influenced by the task setup and presence of additional proprioceptive inputs. Additionally, we demonstrate that our domain randomised agents require higher sample complexity, can overfit and more heavily rely on recurrent processing. Furthermore, even with an improved saliency method introduced in this work, we show that qualitative studies may not always correspond with quantitative measures, necessitating the combination of inspection tools in order to provide sufficient insights into the behaviour of trained agents.